human

Human Library

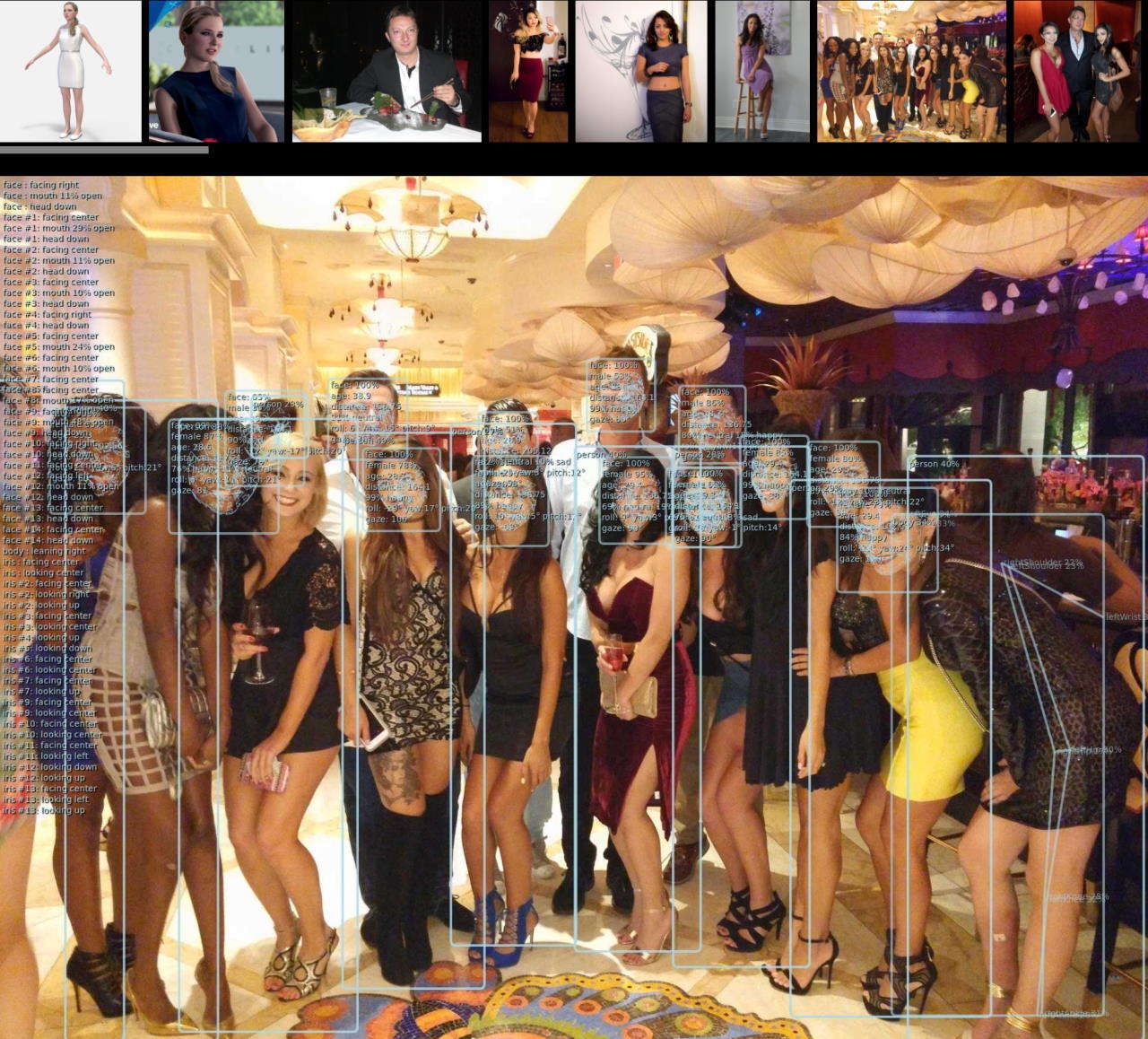

AI-powered 3D Face Detection & Rotation Tracking, Face Description & Recognition,

Body Pose Tracking, 3D Hand & Finger Tracking, Iris Analysis,

Age & Gender & Emotion Prediction, Gaze Tracking, Gesture Recognition, Body Segmentation

Highlights

- Compatible with most server-side and client-side environments and frameworks

- Combines multiple machine learning models which can be switched on-demand depending on the use-case

- Related models are executed in an attention pipeline to provide details when needed

- Optimized input pre-processing that can enhance image quality of any type of inputs

- Detection of frame changes to trigger only required models for improved performance

- Intelligent temporal interpolation to provide smooth results regardless of processing performance

- Simple unified API

- Built-in Image, Video and WebCam handling
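The temporal interpolation mentioned in the highlights can be pictured with a small sketch. This is not Human's internal implementation, just a generic blend between the last drawn keypoints and the latest detection; `smoothKeypoints` and `factor` are hypothetical names introduced for illustration.

```javascript
// Illustrative only: generic smoothing of keypoints between detections.
// `smoothKeypoints` and `factor` are hypothetical and not part of the Human API.
function smoothKeypoints(previous, current, factor = 0.5) {
  // without history (or if the number of points changed) there is nothing to blend
  if (!previous || previous.length !== current.length) return current.map((pt) => [...pt]);
  // move each coordinate part of the way from the previous value toward the current one
  return current.map((pt, i) => pt.map((value, j) => previous[i][j] + factor * (value - previous[i][j])));
}

const lastDrawn = [[0, 0], [10, 10]]; // keypoints drawn on the previous frame
const detected = [[4, 8], [20, 10]]; // keypoints from the latest detection
const drawn = smoothKeypoints(lastDrawn, detected, 0.25);
console.log(drawn); // [[1, 2], [12.5, 10]]: each point moves 25% toward the detection
```

In the library itself the smoothed result is obtained with `human.next()`, as shown in the code examples further down.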

Compatibility

Browser:

- Compatible with both desktop and mobile platforms

- Compatible with WebGPU, WebGL, WASM, CPU backends

- Compatible with WebWorker execution

- Compatible with WebView

- Primary platform: Chromium-based browsers

- Secondary platform: Firefox, Safari

NodeJS:

- Compatible with WASM backend for execution on architectures where tensorflow binaries are not available

- Compatible with tfjs-node using software execution via tensorflow shared libraries

- Compatible with tfjs-node using GPU-accelerated execution via tensorflow shared libraries and nVidia CUDA

- Supported versions are from 14.x to 22.x

- NodeJS version 23.x is not supported due to breaking changes and issues with @tensorflow/tfjs
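A startup guard can make the supported NodeJS range explicit. The sketch below only encodes the range stated above (14.x to 22.x); `isSupportedNode` is a hypothetical helper, not something shipped with Human.

```javascript
// Hypothetical helper: checks a NodeJS version string against the supported range (14.x to 22.x).
function isSupportedNode(version) {
  // accept both '18.19.0' and 'v18.19.0' and keep only the major version
  const major = Number.parseInt(String(version).replace(/^v/, '').split('.')[0], 10);
  return Number.isInteger(major) && major >= 14 && major <= 22;
}

console.log(isSupportedNode('18.19.0')); // true
console.log(isSupportedNode('v23.1.0')); // false
```

In an application it could be called as `isSupportedNode(process.versions.node)` before loading the library.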

Releases

- Release Notes

- NPM Link

Demos

Check out Simple Live Demo, a fully annotated app, as a good starting point (html)(code)

Check out Main Live Demo app for advanced processing of webcam, video stream or static images with all possible tunable options

- To start video detection, simply press Play

- To process images, simply drag & drop in your Browser window

- Note: For optimal performance, select only models you’d like to use

- Note: If you have a modern GPU, the WebGL (default) backend is preferred; otherwise select the WASM backend
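The two notes above can be expressed as a configuration object. This is a minimal sketch: `buildConfig`, `hasModernGpu` and `wanted` are hypothetical names, and the per-module `enabled` flags are assumed to follow the layout described in Configuration Details, so check that page before relying on them.

```javascript
// Sketch: build a Human configuration that enables only the selected models.
// `buildConfig`, `hasModernGpu` and `wanted` are hypothetical names;
// the nested `enabled` flags are an assumption to verify against Configuration Details.
function buildConfig({ hasModernGpu, wanted }) {
  const modules = ['face', 'body', 'hand', 'object', 'gesture'];
  // prefer WebGL on capable hardware, fall back to WASM otherwise
  const config = { backend: hasModernGpu ? 'webgl' : 'wasm' };
  for (const name of modules) config[name] = { enabled: wanted.includes(name) };
  return config;
}

const config = buildConfig({ hasModernGpu: false, wanted: ['face', 'gesture'] });
console.log(config);
// in a page that has loaded the IIFE build: const human = new Human.Human(config);
```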

Browser Demos

All browser demos are self-contained without any external dependencies

- Full [Live] [Details]: Main browser demo app that showcases all Human capabilities

- Simple [Live] [Details]: Simple WebCam processing demo in TypeScript

- Embedded [Live] [Details]: Even simpler demo with tiny code embedded in HTML file

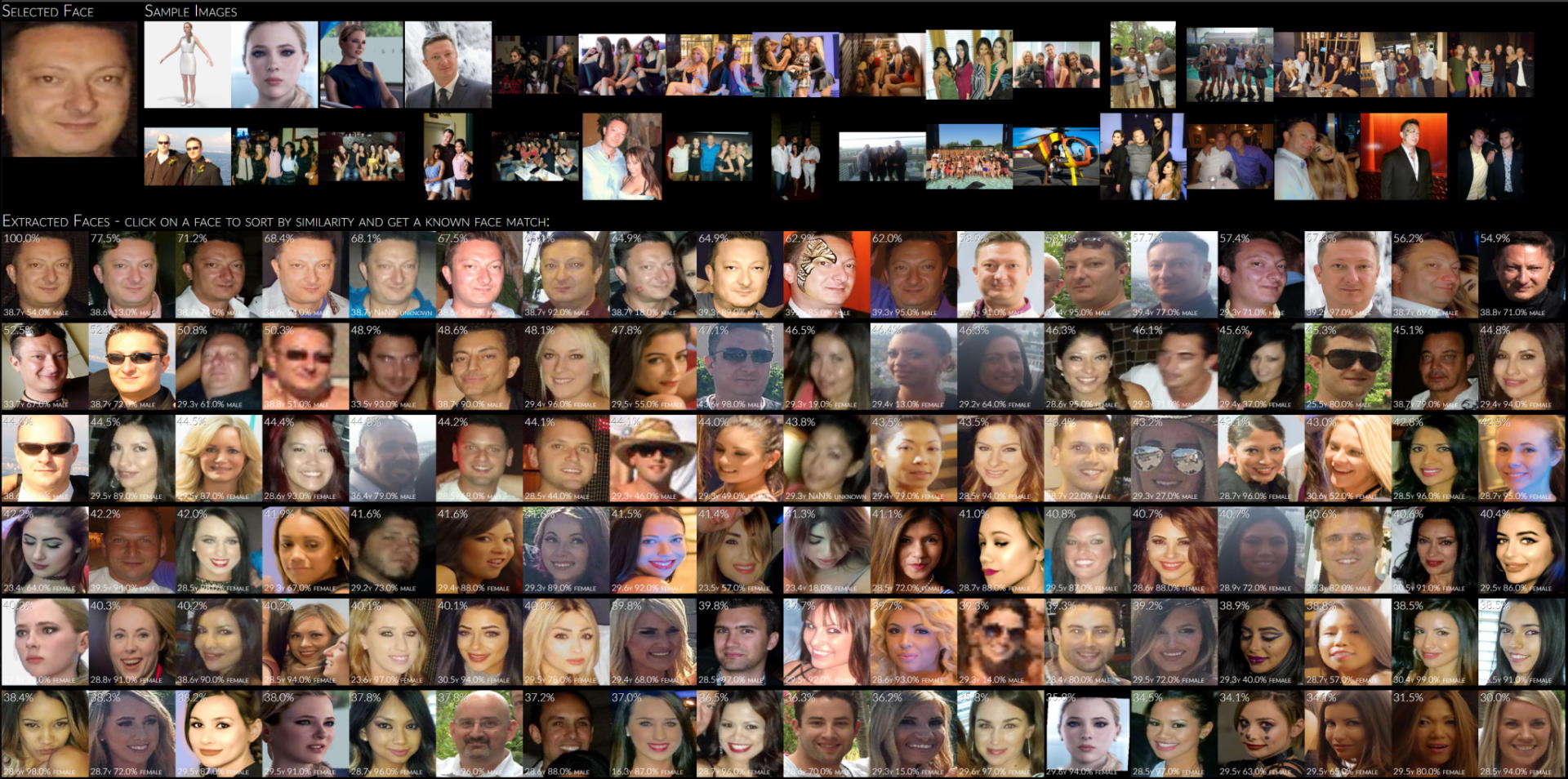

- Face Detect [Live] [Details]: Extracts faces from images and processes details

- Face Match [Live] [Details]: Extracts faces from images, calculates face descriptors and similarities, and matches them to a known database

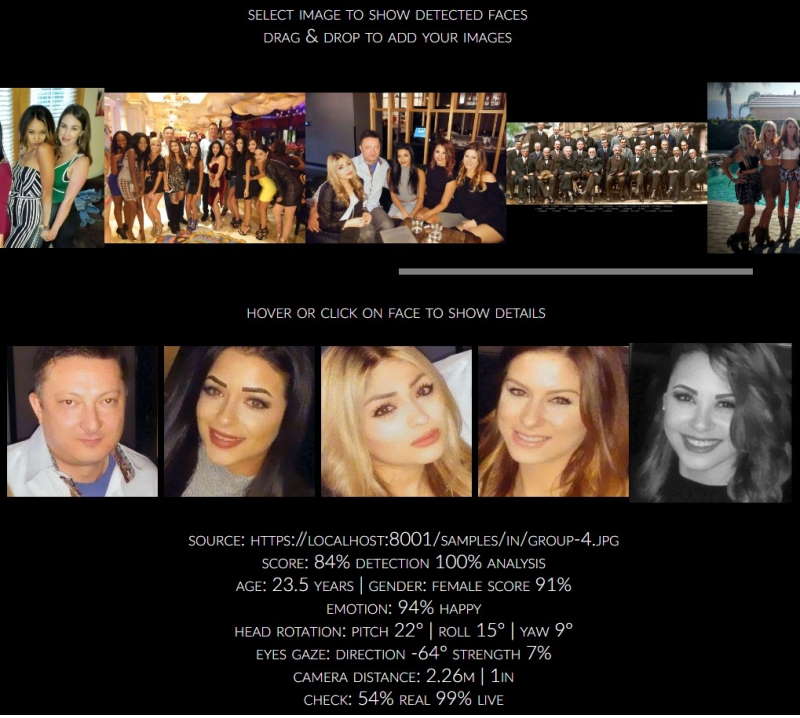

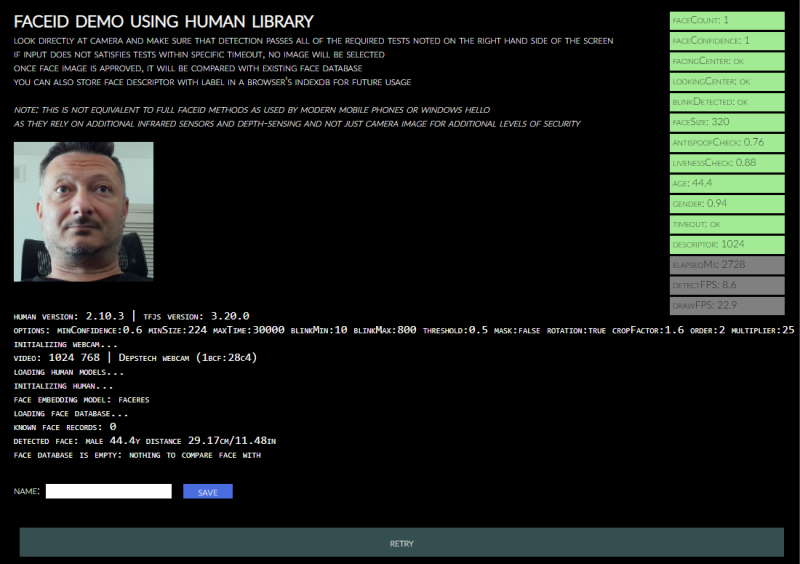

- Face ID [Live] [Details]: Runs multiple checks to validate webcam input before performing face match to faces in IndexedDB

- Multi-thread [Live] [Details]: Runs each Human module in a separate web worker for highest possible performance

- NextJS [Live] [Details]: Use Human with TypeScript, NextJS and ReactJS

- ElectronJS [Details]: Use Human with TypeScript and ElectronJS to create standalone cross-platform apps

- 3D Analysis with BabylonJS [Live] [Details]: 3D tracking and visualization of head, face, eye, body and hand

- VRM Virtual Model Tracking with Three.JS [Live] [Details]: VR model with head, face, eye, body and hand tracking

- VRM Virtual Model Tracking with BabylonJS [Live] [Details]: VR model with head, face, eye, body and hand tracking
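The Face Match demo listed above ranks faces by how close their descriptors are. The sketch below illustrates that idea with generic cosine similarity over plain numeric arrays; it is not Human's own matching code, and `cosineSimilarity`, `rankBySimilarity` and the sample data are made up for illustration.

```javascript
// Illustrative only: generic cosine similarity between two descriptor vectors.
// Function names and sample data are hypothetical, not part of the Human API.
function cosineSimilarity(a, b) {
  if (a.length !== b.length || a.length === 0) return 0;
  let dot = 0;
  let normA = 0;
  let normB = 0;
  for (let i = 0; i < a.length; i++) {
    dot += a[i] * b[i];
    normA += a[i] * a[i];
    normB += b[i] * b[i];
  }
  if (normA === 0 || normB === 0) return 0; // avoid division by zero for empty vectors
  return dot / (Math.sqrt(normA) * Math.sqrt(normB));
}

// sort database entries from most to least similar to the target descriptor
function rankBySimilarity(target, database) {
  return database
    .map((entry) => ({ name: entry.name, similarity: cosineSimilarity(target, entry.descriptor) }))
    .sort((x, y) => y.similarity - x.similarity);
}

const known = [
  { name: 'alice', descriptor: [1, 0, 0] },
  { name: 'bob', descriptor: [0, 1, 0] },
];
const ranked = rankBySimilarity([0.9, 0.1, 0], known);
console.log(ranked[0].name); // 'alice'
```

Real descriptors are much longer than three values; see Face Recognition & Face Description in the wiki for the functions the library actually provides.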

NodeJS Demos

NodeJS demos may require extra dependencies which are used to decode inputs

See header of each demo to see its dependencies as they are not automatically installed with Human

- Main [Details]: Process images from files, folders or URLs using native methods

- Canvas [Details]: Process image from file or URL and draw results to a new image file using node-canvas

- Video [Details]: Processing of video input using ffmpeg

- WebCam [Details]: Processing of webcam screenshots using fswebcam

- Events [Details]: Showcases usage of Human eventing to get notifications on processing

- Similarity [Details]: Compares two input images for similarity of detected faces

- Face Match [Details]: Parallel processing of face match in multiple child worker threads

- Multiple Workers [Details]: Runs multiple parallel human instances by dispatching them to a pool of pre-created worker processes

- Dynamic Load [Details]: Loads Human dynamically with multiple different desired backends

Project pages

- Code Repository

- NPM Package

- Issues Tracker

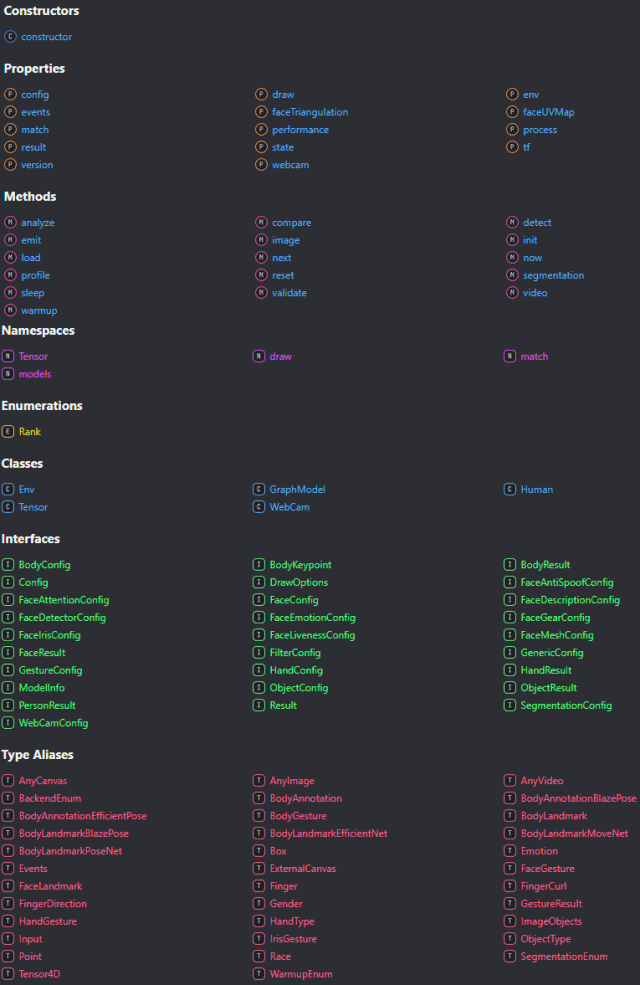

- TypeDoc API Specification - Main class

- TypeDoc API Specification - Full

- Change Log

- Current To-do List

Wiki pages

- Home

- Installation

- Usage & Functions

- Configuration Details

- Result Details

- Customizing Draw Methods

- Caching & Smoothing

- Input Processing

- Face Recognition & Face Description

- Gesture Recognition

- Common Issues

- Background and Benchmarks

Additional notes

- Comparing Backends

- Development Server

- Build Process

- Adding Custom Modules

- Performance Notes

- Performance Profiling

- Platform Support

- Diagnostic and Performance trace information

- Dockerize Human applications

- List of Models & Credits

- Models Download Repository

- Security & Privacy Policy

- License & Usage Restrictions

See issues and discussions for list of known limitations and planned enhancements

Suggestions are welcome!

App Examples

Visit Examples gallery for more examples

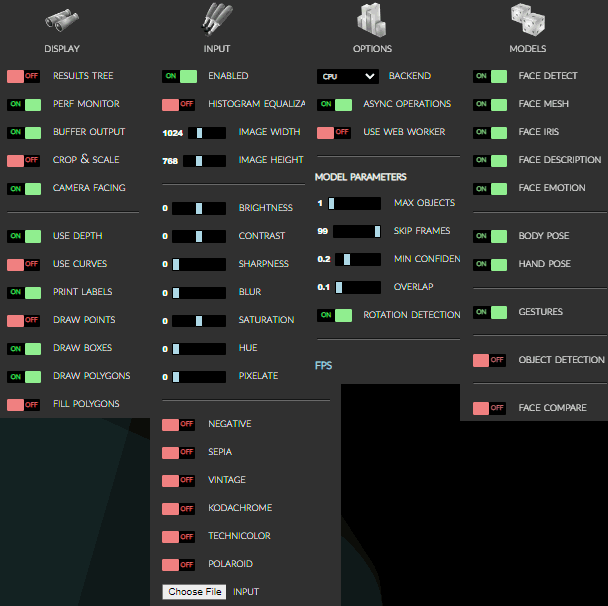

Options

All options as presented in the demo application…

demo/index.html

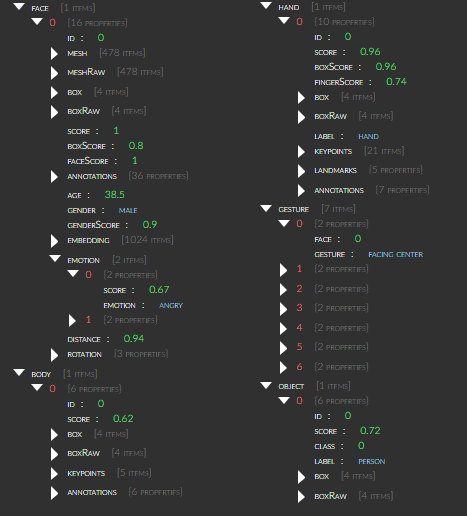

Results Browser:

[ Demo -> Display -> Show Results ]

Advanced Examples

- Face Similarity Matching:

Extracts all faces from provided input images,

sorts them by similarity to selected face

and optionally matches detected face with database of known people to guess their names

- Face Detect:

Extracts all detected faces from loaded images on-demand and highlights face details on a selected face

- Face ID:

Performs validation check on a webcam input to detect a real face and matches it to known faces stored in database

- 3D Rendering:

- VR Model Tracking:

- Human as OS native application:

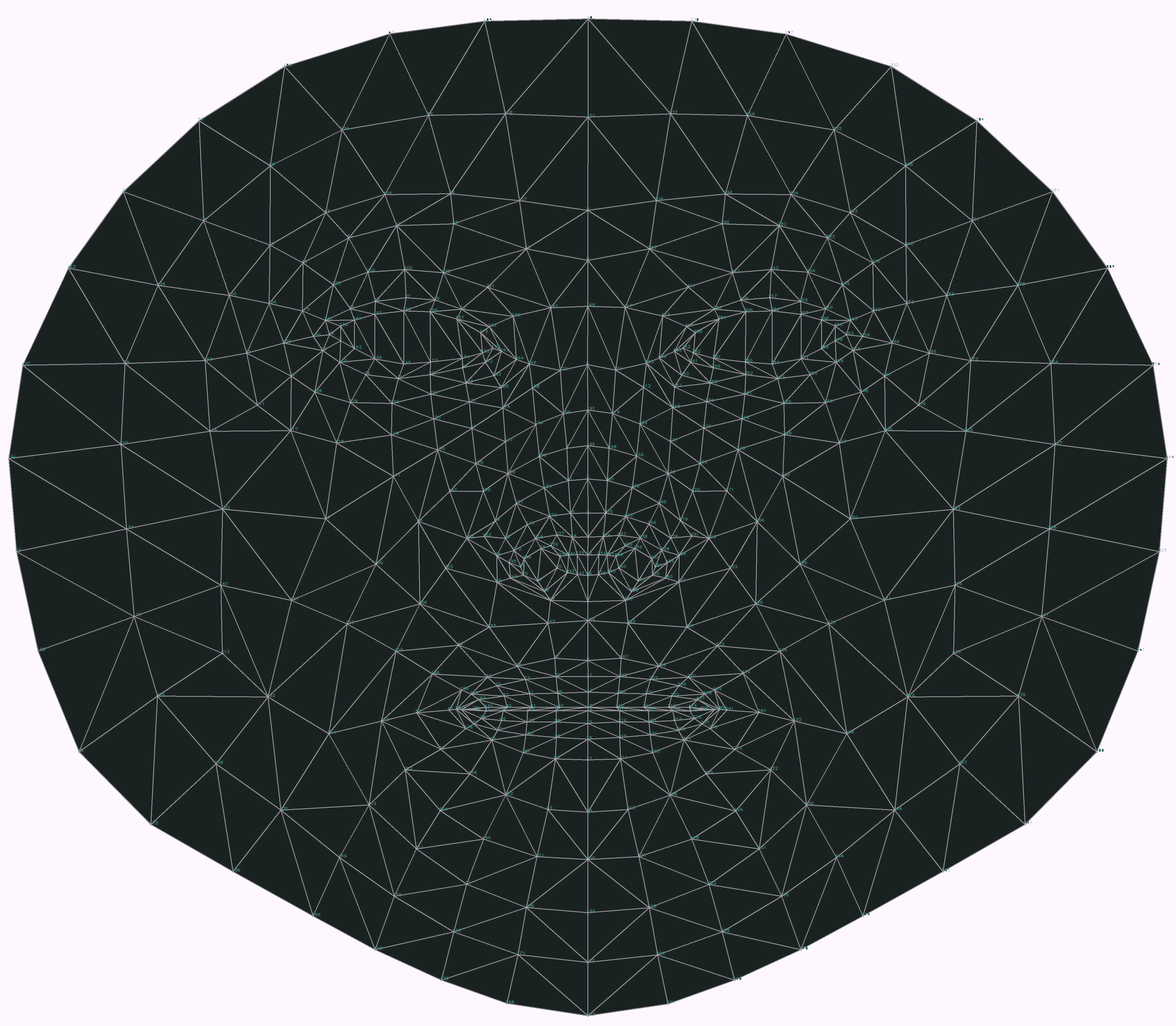

468-Point Face Mesh Details:

(view in full resolution to see keypoints)

<hr>

Quick Start

Simply load Human (IIFE version) directly from a cloud CDN in your HTML file:

(pick one: jsdelivr, unpkg or cdnjs)

<!DOCTYPE HTML>

<script src="https://cdn.jsdelivr.net/npm/@vladmandic/human/dist/human.js"></script>

<script src="https://unpkg.dev/@vladmandic/human/dist/human.js"></script>

<script src="https://cdnjs.cloudflare.com/ajax/libs/human/3.0.0/human.js"></script>

For details, including how to use Browser ESM version or NodeJS version of Human, see Installation

Code Examples

Simple app that uses Human to process video input and

draw output on screen using internal draw helper functions

// create instance of human with simple configuration using default values

const config = { backend: 'webgl' };

const human = new Human.Human(config);

// select input HTMLVideoElement and output HTMLCanvasElement from page

const inputVideo = document.getElementById('video-id');

const outputCanvas = document.getElementById('canvas-id');

function detectVideo() {

// perform processing using default configuration

human.detect(inputVideo).then((result) => {

// result object will contain detected details

// as well as the processed canvas itself

// so let's first draw processed frame on canvas

human.draw.canvas(result.canvas, outputCanvas);

// then draw results on the same canvas

human.draw.face(outputCanvas, result.face);

human.draw.body(outputCanvas, result.body);

human.draw.hand(outputCanvas, result.hand);

human.draw.gesture(outputCanvas, result.gesture);

// and loop immediately to the next frame

requestAnimationFrame(detectVideo);

return result;

});

}

detectVideo();

or using async/await:

// create instance of human with simple configuration using default values

const config = { backend: 'webgl' };

const human = new Human(config); // create instance of Human

const inputVideo = document.getElementById('video-id');

const outputCanvas = document.getElementById('canvas-id');

async function detectVideo() {

const result = await human.detect(inputVideo); // run detection

human.draw.all(outputCanvas, result); // draw all results

requestAnimationFrame(detectVideo); // run loop

}

detectVideo(); // start loop

or using Events:

// create instance of human with simple configuration using default values

const config = { backend: 'webgl' };

const human = new Human(config); // create instance of Human

const inputVideo = document.getElementById('video-id');

const outputCanvas = document.getElementById('canvas-id');

human.events.addEventListener('detect', () => { // event gets triggered when detect is complete

human.draw.all(outputCanvas, human.result); // draw all results

});

function detectVideo() {

human.detect(inputVideo) // run detection

.then(() => requestAnimationFrame(detectVideo)); // upon detect complete start processing of the next frame

}

detectVideo(); // start loop

or using interpolated results for smooth video processing by separating detection and drawing loops:

const human = new Human(); // create instance of Human

const inputVideo = document.getElementById('video-id');

const outputCanvas = document.getElementById('canvas-id');

let result;

async function detectVideo() {

result = await human.detect(inputVideo); // run detection

requestAnimationFrame(detectVideo); // run detect loop

}

async function drawVideo() {

if (result) { // check if result is available

const interpolated = human.next(result); // get smoothened result using last-known results

human.draw.all(outputCanvas, interpolated); // draw the frame

}

requestAnimationFrame(drawVideo); // run draw loop

}

detectVideo(); // start detection loop

drawVideo(); // start draw loop

or same, but using built-in full video processing instead of running manual frame-by-frame loop:

const human = new Human(); // create instance of Human

const inputVideo = document.getElementById('video-id');

const outputCanvas = document.getElementById('canvas-id');

async function drawResults() {

const interpolated = human.next(); // get smoothened result using last-known results

human.draw.all(outputCanvas, interpolated); // draw the frame

requestAnimationFrame(drawResults); // run draw loop

}

human.video(inputVideo); // start detection loop which continuously updates results

drawResults(); // start draw loop

or using built-in webcam helper methods that take care of video handling completely:

const human = new Human(); // create instance of Human

const outputCanvas = document.getElementById('canvas-id');

async function drawResults() {

const interpolated = human.next(); // get smoothened result using last-known results

human.draw.canvas(human.webcam.element, outputCanvas); // draw current webcam frame (input first, then output canvas)

human.draw.all(outputCanvas, interpolated); // draw the frame detection results

requestAnimationFrame(drawResults); // run draw loop

}

await human.webcam.start({ crop: true });

human.video(human.webcam.element); // start detection loop which continuously updates results

drawResults(); // start draw loop

And for even better results, you can run detection in a separate web worker thread

<hr>

Inputs

Human library can process all known input types:

Image, ImageData, ImageBitmap, Canvas, OffscreenCanvas, Tensor, HTMLImageElement, HTMLCanvasElement, HTMLVideoElement, HTMLMediaElement

Additionally, HTMLVideoElement, HTMLMediaElement can be a standard <video> tag that links to:

- WebCam on user’s system

- Any supported video type, e.g. .mp4, .avi, etc.

- Additional video types supported via HTML5 Media Source Extensions, e.g. HLS (HTTP Live Streaming) using hls.js or DASH (Dynamic Adaptive Streaming over HTTP) using dash.js

- WebRTC media track using built-in support
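When the video source is a stream manifest rather than a plain file, the page first has to decide which player library feeds the `<video>` element. A minimal sketch of that decision, based only on the common manifest extensions (`.m3u8` for HLS, `.mpd` for DASH); `pickPlayer` is a hypothetical helper and the URLs are placeholders.

```javascript
// Hypothetical helper: decide which player would need to attach the source to a <video> element.
function pickPlayer(url) {
  // ignore query string and fragment, compare extensions case-insensitively
  const path = url.split(/[?#]/)[0].toLowerCase();
  if (path.endsWith('.m3u8')) return 'hls.js'; // HLS manifest
  if (path.endsWith('.mpd')) return 'dash.js'; // DASH manifest
  return 'native'; // plain files can be assigned to video.src directly
}

console.log(pickPlayer('https://example.com/live/stream.m3u8?token=abc')); // 'hls.js'
console.log(pickPlayer('clip.mp4')); // 'native'
```

Whichever player is used, the resulting `<video>` element is what gets passed to `human.detect` or `human.video`. Some browsers play HLS natively, so treat the mapping as a starting point.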

<hr>

Detailed Usage

- Wiki Home

- List of all available methods, properties and namespaces

- TypeDoc API Specification - Main class

- TypeDoc API Specification - Full

<hr>

TypeDefs

Human is written using TypeScript strong typing and ships with full TypeDefs for all classes defined by the library bundled in types/human.d.ts and enabled by default

Note: This does not include embedded tfjs

If you want to use embedded tfjs inside Human (human.tf namespace) and still get full typedefs, add this code:

import type * as tfjs from '@vladmandic/human/dist/tfjs.esm';

const tf = human.tf as typeof tfjs;

This is not enabled by default as Human does not ship with full TFJS TypeDefs due to size considerations

Enabling tfjs TypeDefs as above creates additional project (dev-only as only types are required) dependencies as defined in @vladmandic/human/dist/tfjs.esm.d.ts:

@tensorflow/tfjs-core, @tensorflow/tfjs-converter, @tensorflow/tfjs-backend-wasm, @tensorflow/tfjs-backend-webgl

<hr>

Default models

Default models in Human library are:

- Face Detection: MediaPipe BlazeFace Back variation

- Face Mesh: MediaPipe FaceMesh

- Face Iris Analysis: MediaPipe Iris

- Face Description: HSE FaceRes

- Emotion Detection: Oarriaga Emotion

- Body Analysis: MoveNet Lightning variation

- Hand Analysis: HandTrack & MediaPipe HandLandmarks

- Body Segmentation: Google Selfie

- Object Detection: CenterNet with MobileNet v3

Note that alternative models are provided and can be enabled via configuration

For example, body pose detection by default uses MoveNet Lightning, but can be switched to MoveNet Thunder for higher precision or MoveNet MultiPose for multi-person detection or even PoseNet, BlazePose or EfficientPose depending on the use case

For more info, see Configuration Details and List of Models
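Switching the body model is a configuration change. The sketch below assumes the body module accepts a `modelPath` setting and that the model files are named as shown; both are assumptions to confirm against Configuration Details and List of Models, and `bodyModels` and `bodyConfig` are hypothetical names.

```javascript
// Sketch: select a body model variant through configuration.
// File names and the `modelPath` key are assumptions; confirm them in List of Models.
const bodyModels = {
  lightning: 'movenet-lightning.json', // default: fastest
  thunder: 'movenet-thunder.json', // higher precision
  multipose: 'movenet-multipose.json', // multi-person detection
};

function bodyConfig(variant) {
  const modelPath = bodyModels[variant];
  if (!modelPath) throw new Error(`unknown body model variant: ${variant}`);
  return { body: { enabled: true, modelPath } };
}

console.log(bodyConfig('thunder'));
// in a page that has loaded the IIFE build: const human = new Human.Human(bodyConfig('thunder'));
```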

<hr>

Diagnostics

<hr>

Human library is written in TypeScript 5.1 using TensorFlow/JS 4.10 and conforming to latest JavaScript ECMAScript version 2022 standard

Build target for distributables is JavaScript ECMAScript version 2018

For details see Wiki Pages

and API Specification